The era of the blank check for Artificial Intelligence is hitting a wall of cold, hard math. While Silicon Valley evangelists continue to preach about an infinite growth curve, the boardrooms of the Fortune 500 are starting to ask a dangerous question: where is the money? For the past two years, the global tech industry has operated on the assumption that massive capital expenditure would inevitably translate into massive revenue. That assumption is now being interrogated by reality.

The projected plateau in AI spending is not a sign of the technology failing. It is a sign of the market maturing past its honeymoon phase. Companies that spent 2023 and 2024 stockpiling H100 chips and hiring every machine learning engineer with a pulse are now staring at balance sheets that don't add up. We are moving from the "build it and they will come" phase to the "show me the return on investment" phase. If the returns don't materialize in the next eighteen months, the current surge in infrastructure spending will face a correction that will make the 2000 dot-com crash look like a minor accounting error.

The Infrastructure Trap

The current spending frenzy is driven largely by the fear of missing out. This is a classic psychological trap in corporate cycles. Hyperscalers like Microsoft, Google, and Meta are locked in an arms race, spending tens of billions of dollars per quarter on data centers and specialized hardware. They have to. If they stop, they cede the future to their rivals.

However, this creates a massive disconnect between the suppliers of AI (the chipmakers and cloud providers) and the consumers. The companies actually buying these services to improve their businesses are finding that the "intelligence" they are purchasing is expensive to run and difficult to integrate into existing workflows.

Consider the energy problem. Each new generation of large language models has demanded far more computing power than the last. This translates into a physical requirement for electricity that our aging power grids simply cannot handle. When a tech giant wants to build a new five-gigawatt data center but the local utility company says it will take eight years to upgrade the lines, the spending hits a physical ceiling. You cannot spend your way out of a lack of copper and transformers.

The Productivity Paradox

We were promised that AI would automate the mundane and supercharge the creative. So far, the results are mixed at best. In the legal and financial sectors, firms have spent millions on "copilot" technologies only to find that the human oversight required to check for hallucinations negates much of the time saved.

The cost of an AI seat, often $30 per user per month, adds up quickly. For a company with 10,000 employees, that is an extra $3.6 million a year on top of its existing software stack. Unless that company can prove that the software is saving at least $3.6 million in labor costs or generating that much in new business, the subscription will be the first thing cut when the CFO looks for "efficiencies" during the next quarterly review.
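The seat math above is easy to check, and it can be turned around into a break-even question: how many hours per user per month does the tool need to save, net of the time spent checking its output, to pay for itself? The sketch below is a back-of-the-envelope illustration, not a costing method from any vendor. The function names and the $60 fully loaded hourly labor cost are assumptions introduced here; only the $30 seat price and the 10,000-employee headcount come from the text.

```python
def annual_seat_cost(seats: int, price_per_seat_month: float) -> float:
    """Total yearly subscription spend for a per-seat AI license."""
    return seats * price_per_seat_month * 12


def break_even_hours_per_user_month(
    price_per_seat_month: float, loaded_hourly_cost: float
) -> float:
    """Net hours each user must save per month to cover the seat price.

    "Net" means after subtracting the time spent reviewing the output
    for hallucinations and other errors.
    """
    return price_per_seat_month / loaded_hourly_cost


# Figures from the article: 10,000 employees at $30 per user per month.
cost = annual_seat_cost(10_000, 30.0)
print(f"Annual seat cost: ${cost:,.0f}")  # Annual seat cost: $3,600,000

# ASSUMPTION: $60/hour fully loaded labor cost, chosen only for illustration.
hours = break_even_hours_per_user_month(30.0, 60.0)
print(f"Break-even: {hours:.1f} net hours saved per user per month")
```

Under that assumed labor rate the bar looks low on paper, which is exactly why the oversight time matters: the savings have to survive the review step and be demonstrable to a CFO, not just plausible.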

The hype cycle ignores the friction of implementation. It is easy to show a demo of an AI writing a blog post. It is incredibly difficult to build an AI that can handle a complex, multi-step supply chain optimization problem without making a catastrophic error. Companies are realizing that the "last mile" of AI integration is the most expensive and the least automated part of the process.

The Looming Hardware Glut

History tells us that every period of over-investment is followed by a period of over-supply. During the late 1990s, telecommunications companies laid thousands of miles of fiber optic cable that sat "dark" and unused for years. We are seeing the early signs of a similar "dark compute" scenario.

If the demand for AI-generated content or specialized enterprise applications doesn't keep pace with the massive build-out of GPU clusters, we will see a secondary market flooded with discounted hardware. Startups that raised money on the promise of "exclusive access to compute" will find their primary asset devalued. This isn't just a theory; it is the basic law of supply and demand.

The Specialized Model Shift

Smart money is already moving away from the "bigger is better" philosophy. The idea that we can just keep feeding more data into larger models to get smarter results is reaching a point of diminishing returns. Costs keep climbing steeply with each model generation, while the performance gains are starting to flatten out.

Instead, we are seeing a pivot toward Small Language Models (SLMs). These are highly specialized tools trained on specific datasets for specific tasks. They are cheaper to train, cheaper to run, and easier to secure. For a bank, a model that only knows how to detect fraud in its specific transaction types is infinitely more valuable—and cheaper—than a general-purpose model that can also write poetry about the French Revolution. This shift will drastically reduce the total amount of "general purpose" AI spending as companies realize they don't need a nuclear reactor to light a candle.

Data Exhaustion and Legal Walls

The largest models have already "eaten" most of the high-quality public internet data. To get better, they need more data, but that data is increasingly locked behind paywalls or tied up in copyright litigation.

Major publishers, artists, and even social media platforms are closing their doors to the web crawlers that fueled the initial AI boom. If companies have to pay for every scrap of data they use for training, the cost of developing new models will skyrocket. At the same time, the legal risk of using AI-generated output that might infringe on intellectual property is making corporate legal departments very nervous.

A single high-profile copyright ruling could render billions of dollars of R&D worthless overnight. This regulatory and legal uncertainty is a massive drag on long-term spending. No rational CEO wants to commit to a five-year, nine-figure AI strategy if the underlying technology might be declared illegal or require a massive royalty payout three years from now.

The Talent Drain and Reality Check

The astronomical salaries once offered to anyone who could spell "Transformer" are beginning to stabilize. The market is distinguishing between the true innovators and the "prompt engineers" who were simply riding the wave. As the "talent war" cools, the massive personnel costs associated with AI projects will also plateau.

We are entering the "trough of disillusionment." This is a necessary part of any technology cycle. It is where the grifters are weeded out and the real work begins. The companies that survive the coming spending plateau will be the ones that focused on solving specific, boring problems rather than chasing the "God-like AI" narrative.

The Sovereign AI Gamble

The only factor that might delay the plateau is the rise of "Sovereign AI." This is the trend of national governments—particularly in the Middle East and parts of Asia—spending billions to build their own domestic AI infrastructure. These players aren't necessarily looking for an immediate ROI in the way a private company does. They are looking for strategic autonomy and national security.

But even sovereign wealth has its limits. Eventually, these systems must prove their utility. If a nation builds a multi-billion dollar AI cluster that doesn't actually improve the lives of its citizens or the efficiency of its economy, the political will to continue funding it will evaporate.

Practical Steps for the Sidetracked

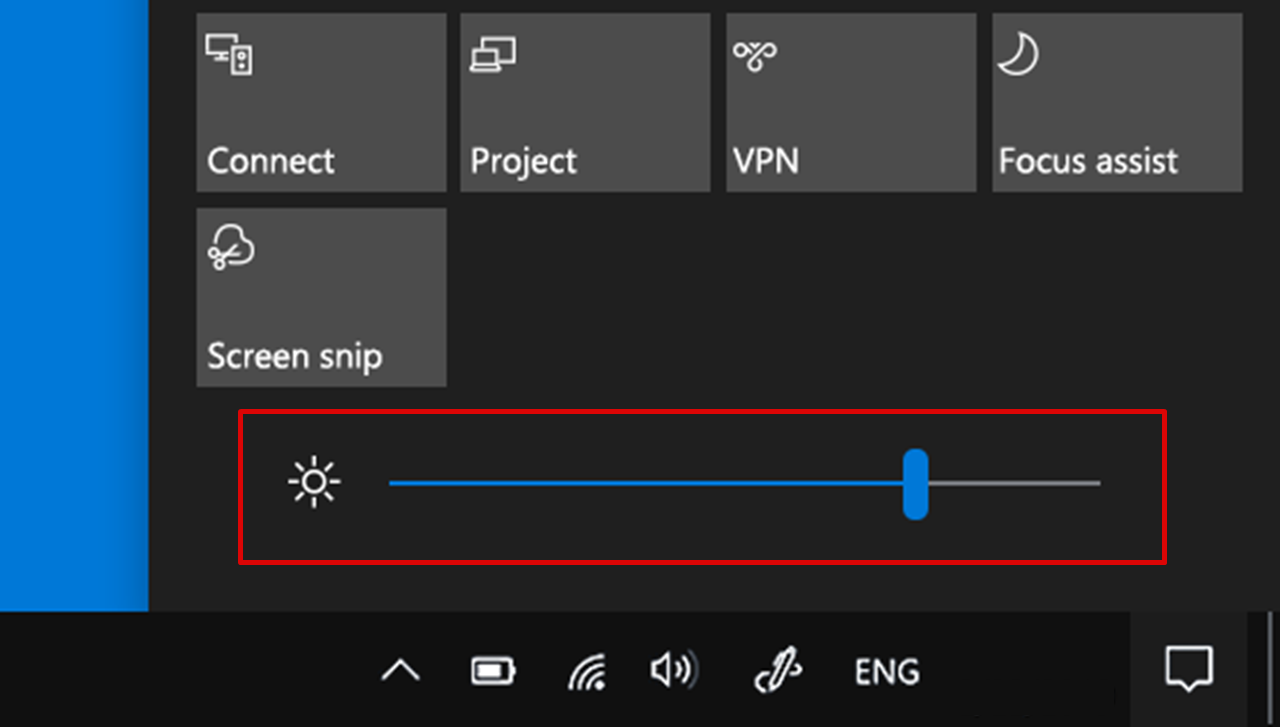

If you are a leader currently navigating this volatility, the path forward requires a ruthless audit of your current projects. Stop looking at AI as a "magic box" and start looking at it as a utility, like electricity or cloud storage.

- Kill the "Experimental" PoCs: If a Proof of Concept hasn't moved to production in six months, kill it. It is a drain on resources and morale.

- Audit Your Data Pipeline: The quality of your internal data is more important than the size of the model you use. Clean data on a small model beats dirty data on a large one every time.

- Demand Vendor Accountability: Do not accept "we are working on it" as an answer regarding hallucinations or data privacy. If a vendor cannot provide a clear Service Level Agreement for the accuracy of their AI output, find a different vendor.

The plateau is not an ending. It is a correction. The companies that thrive will be those that treat AI as a tool for precision, not a shortcut to innovation. The era of easy money is over, and the era of engineering discipline has begun. Move your budget from "exploration" to "execution" immediately or prepare to explain to your shareholders why you spent their money on a digital paperweight.