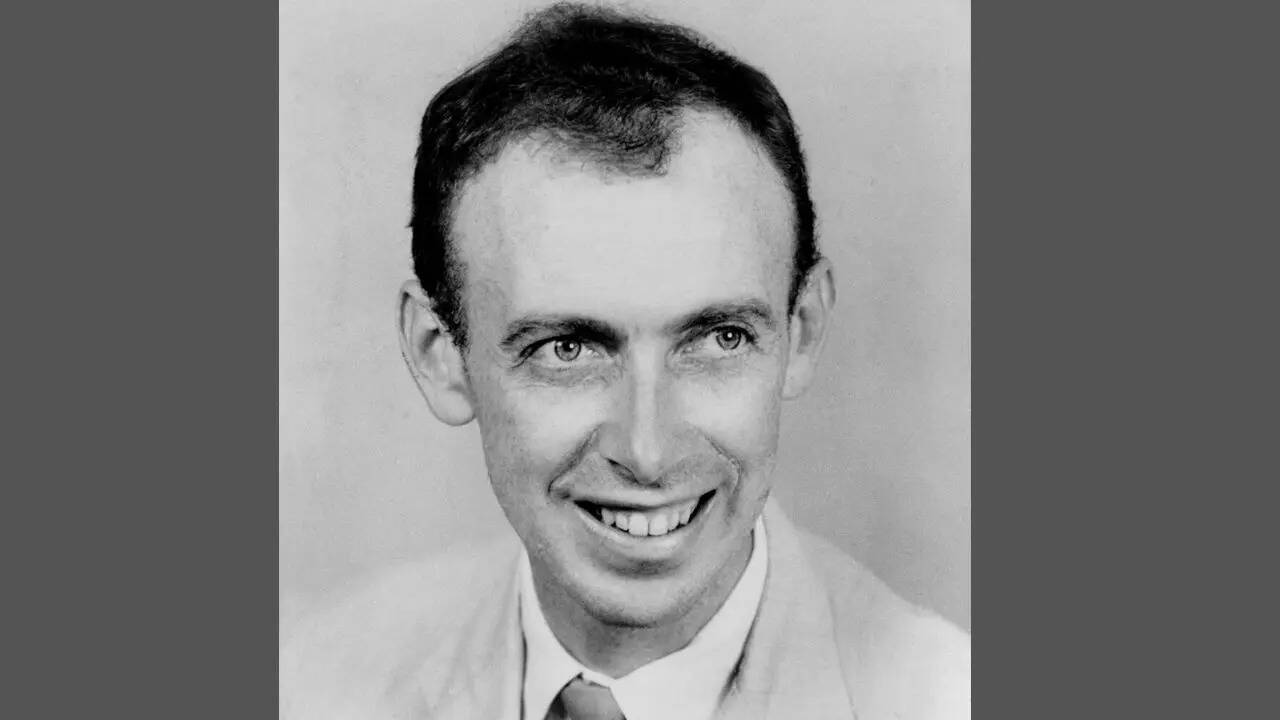

In the high-stakes world of scientific discovery and industrial engineering, there is a recurring trap that claims the brightest minds. We call it the complexity bias. It is the instinctive, almost arrogant belief that a difficult problem requires an equally difficult answer. When James Watson famously remarked that worrying about complications before ruling out simple answers would be "damned foolishness," he wasn't just offering a pithy quote for a coffee mug. He was describing the brutal efficiency that allowed him and Francis Crick to beat better-funded, more established rivals to the structure of DNA.

The most effective leaders don't find answers by building more elaborate models. They find them by stripping away the noise until only the fundamental truth remains. If you cannot explain the failure of a system or the logic of a strategy through basic principles, you probably don't understand the problem yet.

The Arrogance of Complexity

Complexity is often used as a shield. In the corporate world, a manager who presents a 50-slide deck filled with dense jargon and overlapping Venn diagrams is usually hiding the fact that they haven't identified the root cause of a deficit. They lean on "complications" to buy time or to appear indispensable.

True expertise operates in the opposite direction.

Take the aerospace industry during the mid-20th century. Engineers were obsessed with heat-shielding materials that could withstand atmospheric reentry. Many teams looked toward exotic alloys and active cooling systems—solutions that were technically "brilliant" but practically fragile. The winning solution? The ablative heat shield. It was a simple slab of material designed to char and fall away, taking the heat with it. It was elegant. It was cheap. Most importantly, it worked because it relied on a basic physical process rather than a complex mechanical one.

We see this same pattern in modern software development. When a system crashes under heavy load, the immediate impulse is often to add more layers: more load balancers, more microservices, more monitoring tools. Each addition looks reasonable on its own, but together they leave the system so interconnected that no one person understands how it functions. The veteran analyst knows to look at the database queries first. More often than not, a single inefficient query or line of code is the culprit.
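To make the "look at the queries first" habit concrete, here is a minimal sketch of one common culprit: a loop that issues one query per row when a single grouped query would do. The schema, the `CountingConnection` wrapper, and the function names are all hypothetical, invented for illustration; the only real API used is Python's standard `sqlite3` module.

```python
import sqlite3


def build_db(num_customers=50):
    """Create a throwaway in-memory database with made-up data."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
    )
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [(i, f"customer-{i}") for i in range(num_customers)],
    )
    conn.executemany(
        "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
        [(i, 10.0 * (i + 1)) for i in range(num_customers)],
    )
    return conn


class CountingConnection:
    """Hypothetical wrapper that counts how many statements are issued."""

    def __init__(self, conn):
        self.conn = conn
        self.queries = 0

    def execute(self, sql, params=()):
        self.queries += 1
        return self.conn.execute(sql, params)


def totals_one_query_per_customer(db):
    """The 'single inefficient line': a query inside a loop."""
    totals = {}
    for (customer_id,) in db.execute("SELECT id FROM customers").fetchall():
        row = db.execute(
            "SELECT SUM(total) FROM orders WHERE customer_id = ?", (customer_id,)
        ).fetchone()
        totals[customer_id] = row[0]
    return totals


def totals_single_query(db):
    """Same result, one round trip to the database."""
    rows = db.execute(
        "SELECT customer_id, SUM(total) FROM orders GROUP BY customer_id"
    ).fetchall()
    return dict(rows)


if __name__ == "__main__":
    conn = build_db()
    slow, fast = CountingConnection(conn), CountingConnection(conn)
    assert totals_one_query_per_customer(slow) == totals_single_query(fast)
    print("queries issued in loop version:", slow.queries)
    print("queries issued in grouped version:", fast.queries)
```

Neither version needs a new load balancer. Counting the statements a request issues is a cheap first check before reaching for more infrastructure.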

Why We Fight Simplicity

If simple answers are so effective, why do we actively avoid them? The answer is psychological.

Human beings are hardwired to equate effort with value. If a problem is "big," we feel that the solution must be "big" to justify our status or our paycheck. To admit that a multi-million dollar bottleneck can be fixed by changing a single procedural step feels like an admission of previous failure.

- The Fear of Looking Unprepared: Simple solutions look easy in hindsight. Before they are proven, they often look "too easy" to be taken seriously by a board of directors or a grant committee.

- The Sunk Cost Fallacy: Organizations that have invested years into a complex methodology will ignore simple alternatives because they cannot stomach the idea that their previous work was unnecessary.

- Intellectual Vanity: High-performers want to flex their mental muscles. Solving a puzzle with a sledgehammer is more satisfying to the ego than turning a key.

Watson’s "damned foolishness" was a warning against this specific brand of vanity. He recognized that the universe is governed by laws that tend toward the most efficient path. When you fight that path, you aren't being thorough; you’re being an obstacle.

The Investigative Audit

When I investigate a failing project, I start by asking for the "Simple Model." If the team can’t produce one, the project is already in trouble.

Consider a manufacturing firm struggling with quality control. They might spend a fortune on AI-driven optical sensors and predictive analytics. But an on-the-ground audit might reveal that the workers on the floor are simply fatigued because the lighting is poor. No amount of neural-network processing will fix a problem caused by a $20 lightbulb.

Scenarios like this are not far-fetched. They reflect how systems actually break. The "complications" that we worry about are often secondary effects of a primary, simple failure.

Stripping the Variable List

In data science, there is a concept known as overfitting. This happens when a model is so complex that it starts to account for random noise rather than the actual underlying trend. The model looks perfect on paper, but it fails the moment it hits the real world.
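A small sketch can show what overfitting looks like in practice. The data below is invented for illustration: six training points drawn from the line y = 2x with a fixed, made-up wobble added. The simple model is a least-squares line; the complex model is a degree-5 polynomial that passes through every training point exactly. All function names are hypothetical, and only the Python standard library is used.

```python
def fit_line(xs, ys):
    """Ordinary least-squares straight line (the simple model)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def fit_interpolant(xs, ys):
    """Lagrange polynomial through every training point (the complex model)."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            weight = 1.0
            for j, xj in enumerate(xs):
                if j != i:
                    weight *= (x - xj) / (xi - xj)
            total += yi * weight
        return total
    return predict


def mse(model, xs, ys):
    """Mean squared error of a model on a set of points."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Made-up training data: the true trend is y = 2x, plus a fixed wobble.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
noise = [0.5, -0.5, 0.5, -0.5, 0.5, -0.5]
train_y = [2 * x + e for x, e in zip(train_x, noise)]

# Held-out points the models never saw, taken from the true trend.
test_x = [0.5, 2.5, 4.5, 6.0]
test_y = [2 * x for x in test_x]

line = fit_line(train_x, train_y)
poly = fit_interpolant(train_x, train_y)

if __name__ == "__main__":
    print("line  train/test error:", mse(line, train_x, train_y), mse(line, test_x, test_y))
    print("poly  train/test error:", mse(poly, train_x, train_y), mse(poly, test_x, test_y))
```

The polynomial scores a perfect zero on the training points because it has memorized the wobble. On the held-out points its error is far larger than the line's, which is the "looks perfect on paper" failure in miniature.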

Business strategies suffer from the same flaw. A company that tries to track 50 different Key Performance Indicators (KPIs) is tracking nothing at all. They are drowning in data. The most successful turnarounds usually involve identifying the single lever that moves the needle.

In the 1990s, when Apple was on the brink of bankruptcy, Steve Jobs didn’t save it by adding more products. He saved it by cutting the product line by 70%. He forced the company to focus on four great products instead of thirty mediocre ones. He ruled out the complications and returned to a simple, executable answer.

The Cost of Being Wrongly Sophisticated

There is a distinct financial and social cost to ignoring the simple. When you build a complex solution for a simple problem, you create "technical debt." You now have to maintain that complexity forever. You need specialists to run it, more money to fund it, and more time to explain it to new hires.

If the simple answer was the correct one all along, you have effectively taxed your future self for the sake of looking smart today.

In the medical field, this is often a matter of life and death. Diagnostic errors frequently occur because a physician looks for a rare, "exotic" disease (the zebra) instead of the common ailment (the horse). They worry about the complications—the 1% probability—before they have ruled out the 99% probability. It is a waste of resources and a danger to the patient.
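The arithmetic behind "horses before zebras" is Bayes' rule, and a short worked example makes the point. The sketch below assumes a condition with a 1% base rate and a hypothetical test with 90% sensitivity and a 5% false-positive rate. Those test figures are invented for illustration and do not describe any real diagnostic; the function name is likewise hypothetical.

```python
def posterior_given_positive(prior, sensitivity, false_positive_rate):
    """Bayes' rule: probability of the condition given a positive result."""
    true_positives = prior * sensitivity
    false_positives = (1.0 - prior) * false_positive_rate
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Hypothetical numbers: rare condition (1%), decent but imperfect test.
    p = posterior_given_positive(prior=0.01, sensitivity=0.90, false_positive_rate=0.05)
    print(f"chance the rare condition is present after a positive test: {p:.1%}")
```

Under these assumed numbers, a positive result still leaves the rare condition at roughly 15%. The common explanation remains the better first bet until it has actually been ruled out.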

Implementing the Watson Standard

To apply this mindset, you have to be willing to be the most "boring" person in the room. You have to be the one who asks, "What if we just stopped doing X?" or "Does this actually need to be automated?"

- Rule out the basics first: Before hiring a consultant, check the manual. Before upgrading the hardware, optimize the software.

- Challenge the "Expert" Jargon: If someone cannot explain a problem to you without using buzzwords, they are likely lost in the complications.

- Prioritize Visibility: Simple systems are transparent. You can see where they break. Complex systems hide their flaws until they explode.

The next time you are faced with a crisis that seems insurmountable, stop. Put down the spreadsheets. Stop the brainstorming session about "innovative" new frameworks.

Ask yourself if you are being "damned foolish" by ignoring the obvious. The answer is usually sitting right in front of you, disguised as something far too simple to be true.

The hardest part of solving a problem isn't the math or the engineering. It is having the courage to accept a simple answer in a world that rewards complexity. You don't need a more sophisticated plan; you need a clearer lens. Strip away the ego, ignore the noise, and look at the bare bones of the situation. If you can’t find the simple answer there, then—and only then—are you allowed to worry about the complications. Until then, stay focused on the fundamentals. They are the only things that ever truly last.